Halftone QR Codes

Posted 12/19/17

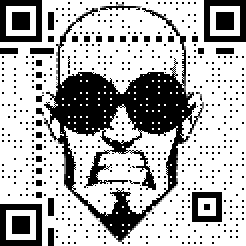

I recently encountered a very neat encoding technique for embedding images into Quick Response Codes, like so:

A full research paper on the topic can be found here, but the core of the algorithm is actually very simple:

-

Generate the QR code with the data you want

-

Dither the image you want to embed, creating a black and white approximation at the appropriate size

-

Triple the size of the QR code, such that each QR block is now represented by a grid of 9 pixels

-

Set the 9 pixels to values from the dithered image

-

Set the middle of the 9 pixels to whatever the color of the QR block was supposed to be

-

Redraw the required control blocks on top in full detail, to make sure scanners identify the presence of the code

That’s it! Setting the middle pixel of each cluster of 9 generally lets QR readers get the correct value for the block, and gives you 8 pixels to represent an image with. Occasionally a block will be misread, but the QR standard includes lots of redundant checksumming blocks to repair damage automatically, so the correct data will almost always be recoverable.

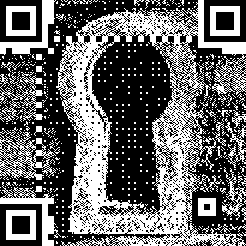

There is a reference implementation in JavaScript of the algorithm I’ve described. I have extended that code so that when a pixel on the original image is transparent the corresponding pixel of the final image is filled in with QR block data instead of dither data. The result is that the original QR code “bleeds in” to any space unused by the image, so you get this:

Instead of this:

This both makes the code scan more reliably and makes it more visually apparent to a casual observer that they are looking at a QR code.

The original researchers take this approach several steps further, and repeatedly perturb the dithered image to get a result that both looks better and scans more reliably. They also create an “importance matrix” to help determine which features of the image are most critical and should be prioritized in the QR rendering. Their code can be found here, but be warned that it’s a mess of C++ with Boost written for Microsoft’s Visual Studio on Windows, and I haven’t gotten it running. While their enhancements yield a marked improvement in image quality, I wish to forgo the tremendous complexity increase necessarily to implement them.