The Journalist/Academic Leaked Data Divide

Posted 3/13/2026

I am presently flying back from a workshop where academics, journalists, and NGOs discussed how to build collaborations among the three when studying leaked documents, particularly leaked financial data. Here I’m sharing some of my takeaways. There were many parties at this workshop, but under the Chatham House Rule I will not attribute anyone’s statements to a particular party, and I’ll phrase things mostly from my own organization’s perspective.

At Distributed Denial of Secrets we publish a great deal of leaked information in the public interest. But millions of dumped spreadsheets, emails, and PDFs aren’t very useful without an expert extracting a narrative from them. We primarily work with journalists: we share data with them, provide search engines and analysis tools to make analyzing leaked data easier, and, when we have an established relationship, alert them to releases on their beat or facilitate collaborations and embargoes between parties. In principle we would like to work with academic researchers, too, in hopes that they extract different insights from the same data. Many academics reach out to us for data access with initial excitement. However, aside from a few notable success stories, we have seen very few academic publications based on our data. So where are academic studies breaking down?

Academic Value

First, why work with academics? Well, there are complementary skill sets: journalists may be better equipped to read leaked memos and emails to piece together significant players in a company, while a computer or data scientist might be better equipped to understand a database dump, build timelines of financial transactions, or run network analysis to find hubs and bridges in communication. Media groups increasingly have data journalists on staff to bridge this gap, but they remain far from ubiquitous.
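As a toy illustration of the network analysis mentioned above, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the names and the `EMAILS` pairs are invented, "hubs" are simply the most-connected accounts, and "bridges" are the connections whose removal would split the network. A real study would extract the pairs from email headers and would likely use a dedicated graph library and richer measures.

```python
from collections import defaultdict

# Hypothetical sender/recipient pairs, invented for illustration only.
EMAILS = [
    ("alice", "bob"), ("alice", "carol"), ("bob", "carol"),
    ("carol", "dave"),
    ("dave", "erin"), ("dave", "frank"), ("erin", "frank"),
]

def build_graph(pairs):
    """Build an undirected adjacency map from (sender, recipient) pairs."""
    graph = defaultdict(set)
    for a, b in pairs:
        graph[a].add(b)
        graph[b].add(a)
    return graph

def reachable(graph, start, skip_edge=None):
    """Return every node reachable from start, optionally ignoring one edge."""
    seen = {start}
    stack = [start]
    while stack:
        node = stack.pop()
        for nxt in graph[node]:
            if skip_edge is not None and {node, nxt} == set(skip_edge):
                continue
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen

def hubs(graph, top=2):
    """Accounts with the most distinct correspondents (ties broken by name)."""
    return sorted(graph, key=lambda n: (-len(graph[n]), n))[:top]

def bridges(graph):
    """Connections whose removal disconnects their two endpoints."""
    edges = {tuple(sorted((a, b))) for a in graph for b in graph[a]}
    return [
        edge for edge in sorted(edges)
        if edge[1] not in reachable(graph, edge[0], skip_edge=edge)
    ]

graph = build_graph(EMAILS)
print("hubs:", hubs(graph))
print("bridges:", bridges(graph))
```

In this invented example the two tightly connected groups are joined only through carol and dave, which is the kind of structural role that is hard to spot by reading individual emails.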

But the bigger contribution is a difference in focus, which I’ll reductively refer to as depth versus breadth. Journalists are interested in a smoking gun, a memo that reveals a conspiracy and tells a clear story, and pursue those documents with great zeal in interviews and archival work. Academics are typically more interested in patterns of behavior, and so may be interested in the most banal documents that journalists pass over to paint a picture of what is normal in a data set.

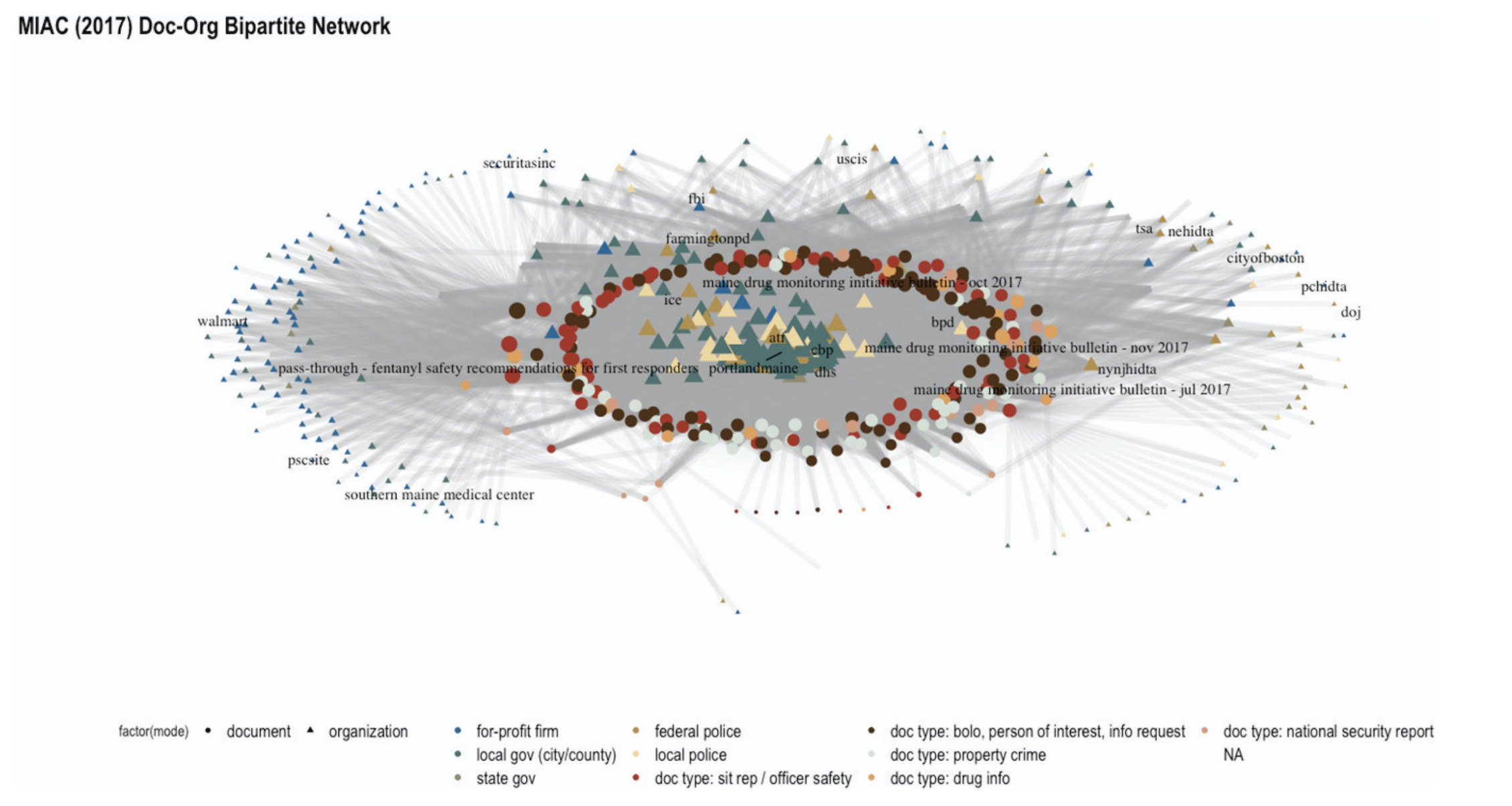

As one example, consider the 2020 publication of BlueLeaks. This was a hack of Netsential, the Google Drive-like service used by opaque cross-jurisdictional counter-terrorism fusion centers. The leak revealed internal memos, emails, training documents, and, for some fusion centers, access logs of which accounts created or read which files at what time. There were hundreds of media articles on individual memos that revealed racist and homophobic police training, data sharing agreements with Google, highly questionable phone record access, and much, much more. But the academic work on BlueLeaks instead focused on categorizing documents in the Boston and Maine fusion centers, demonstrating how over 80% of memos (and most of the highly accessed memos) were about local drug and property crime that was far outside the mandate of a counter-terrorism unit.

When this study was compiled with data from local activists into the MIAC shadow report and presented to the state legislature, legislators found this systemic argument compelling and passed a series of transparency laws aimed at reining in their fusion center. The process was slow: the academic study itself took the better part of a year, and the legislature has been passing new iterations of its fusion center regulation from April 2021 through February of 2026. Nevertheless, media coverage of fusion center practices did not spark the same kind of legislative reform, so these systemic studies are a uniquely valuable lever that should not be overlooked.

The Exploration Problem

The idealized scientific method goes “develop a hypothesis, devise an experiment for testing the hypothesis, gather data needed to evaluate the test, run the test, evaluate results, reject or fail to reject hypothesis.”

Reality is much more complicated. Partway through examining data the hypotheses often evolve, and there’s some push-pull between questions driving the collection of data and the contents of the data driving a refinement of the questions.

When working with leaked data, however, the process is inverted: we don’t know ahead of time what’s in the documents, so our only choice is to fumble around for a while until we gain familiarity with the data set and what questions it might be able to answer. In some qualitative academic traditions, like ethnography and inductive coding, this kind of exploratory data analysis is ubiquitous. In quantitative fields, however, exploratory data analysis is frequently discouraged, because you can invest a lot of time exploring data without seeing any results.

There’s no way to totally sidestep this problem: working with leaked data requires exploration. However, there are a number of ways we can lower the barrier to entry, including aggregating existing media coverage of a dataset (see again the BlueLeaks entry at DDoSecrets), better categorization of data (such as clearly tagging our releases as “financial data” or “SQL dump” to flag which researchers the release might be relevant to and what skills they’ll need to access it), and maybe summaries akin to machine learning’s model cards or more recent network cards.
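To make the tagging and summary-card idea concrete, here is a minimal Python sketch of what such a card could look like. This is not an existing DDoSecrets format: the `DatasetCard` class, its field names, and the example release are all hypothetical, chosen only to show how tags, required skills, and aggregated coverage might sit together in one record.

```python
from dataclasses import dataclass, field

@dataclass
class DatasetCard:
    """Hypothetical summary card for a leaked-data release."""
    name: str
    summary: str
    data_types: list[str]          # e.g. "financial data", "SQL dump"
    skills_needed: list[str]       # what a researcher needs to work with it
    media_coverage: list[str] = field(default_factory=list)  # article titles

    def suits(self, researcher_skills):
        """True if the researcher has every skill the release calls for."""
        return set(self.skills_needed) <= set(researcher_skills)

def find_releases(cards, data_type):
    """Return the names of cards tagged with the given data type."""
    return [card.name for card in cards if data_type in card.data_types]

# Invented example entries, not real releases.
cards = [
    DatasetCard(
        name="Example Bank Leak",
        summary="Transaction records and internal email.",
        data_types=["financial data", "SQL dump"],
        skills_needed=["SQL"],
    ),
    DatasetCard(
        name="Example Agency Memos",
        summary="Scanned internal memos.",
        data_types=["documents"],
        skills_needed=["OCR", "qualitative coding"],
    ),
]

print(find_releases(cards, "financial data"))
print(cards[1].suits(["SQL"]))
```

Even a card this small would let a researcher filter releases by data type and see at a glance whether their lab has the skills to open the files.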

The IRB Challenge

Journalists may run their stories past in-house legal counsel or ethics committees. Academics likewise submit their research proposals to a review board (the Institutional Review Board (IRB) in the United States, Ethics Review Boards (ERB) in Europe, Research Ethics Boards (REB) in Canada, similar idea in all cases) when their research involves human subjects. These boards have the dual purposes of upholding ethical standards regarding potential harm to subjects, and insulating the institution from legal risk.

I do not believe that ethical standards are different between academia and journalism. Responsible reporting balances the value of public knowledge and the risk to the people being reported on, and this is true whether the report is scientific or investigative journalism. However, there is a lot more precedent for journalists working with leaked data than for academics, so getting IRB approval can be a difficult sell.

There are a few responses to this:

- This challenge will fade on its own over time. As we conduct more data science studies of leaked documents there will be stronger precedent for this kind of work, more example ethics statements to draw from, and review boards more familiar with these types of studies.

- We can learn from our colleagues in fields with very extensive ethical framework requirements, like medical researchers. We can carefully describe exactly how data will be used, what will be published, what will be withheld, and how this presents risks to people in the leaked documents and liability to researchers and the institution. We can draw from existing ethical standards at academic associations like the ACM or publications like the Associated Press. This should not be seen as tedious or a checkbox, but as a responsible part of methodological design. If it requires several pages and its own literature review, so be it.

- Not every study requires IRB approval. In particular, computer scientists are notorious for carefully defining “human subjects” and “implied consent” to make their studies IRB-exempt. This tradition comes from studying newspaper articles, where the written output of a human doesn’t qualify as a “human subject,” and publishing the articles in a paper of record implies that we have consent to read the articles. This argument is frequently extended to encompass studies of social media data, and it can be extended to studying leaked documents that have already been made public, especially if they contain limited human data. However, this is a risky choice: when an institution gives IRB approval to a study it not only confirms that it believes the study is ethical, but also agrees to assume legal liability for the study should the researchers be sued. Forgoing IRB approval exposes researchers to potential legal risk instead.

From DDoSecrets’ perspective, we can streamline this process for researchers by compiling prior academic studies using our data and common arguments made to review boards – and we can collaborate with other like-minded institutions to build this resource.

Collaborative Differences

When we talk about getting journalists and academics to study leaked data we can be referring to two things:

- Journalists studying data, followed by academics

- Journalists studying data in collaboration with academics

The former is simpler: journalists explore a dataset, working quickly to publish stories before the news cycle moves on. Academics then descend on the data, using the media coverage as an initial map of what’s in the leaked documents, and working on their own, typically slower, schedule. Academics have some time pressure, too - they want to publish before they get scooped by other researchers, or may be on a schedule bound by grant funding or student availability by semester - but are typically under less time pressure than their journalistic counterparts.

True collaboration is more challenging. Different timelines, different skill sets, and most of all different epistemologies. Nevertheless, I’ve spoken with several teams that have successfully integrated researchers and journalists on projects. They reported that often this looks like two studies conducted in parallel, where the journalists cite the researchers as expert testimony, while the researchers can cite the media coverage in their literature review and discussion of results.

There is a tension in how both parties present methodology. As scientists, we are trained to be as precise as possible, detailing exactly how we take measurements and what caveats should be expected. This can be quite verbose, and impenetrable with technical details and math. By contrast, journalists are reporting to a more general audience, and want as concise and easily explainable a methodology as possible. This difference can prove frustrating, and journalists have been annoyed interviewing me when I choose words carefully and am cautious about what I state we can “know.” However, collaborating can provide a loophole: journalists can report on scientific conclusions, citing the researchers, and abstract away the methods from their audience.

Financial Collaboration

In any collaboration someone needs to foot the bill for both the journalists’ and academics’ time. Sometimes interests align and the academics and journalists have independent sources of funding, but we’ll put that perfect case aside. Academic grants rarely include funding for journalists (although I think this is an interesting avenue to pursue when funding agencies want to see impact and outreach – you get a lot more eyes on news coverage than academic papers), so we have more examples of journalists contracting with academics. This can take two main forms:

- The journalists hire the academics as independent researchers. Academics frequently take consulting gigs with industry partners, so this is well-established practice. However, the academics will not be working in their capacity with the university, and so will not have access to university resources such as supercomputing time or journal access.

- The journalists contract with the university for support. Now the academics will be working for the journalists as part of their day job, and therefore have access to all the university’s resources. However, the university is also likely to take significant overhead costs, much like with research grants.

It may be possible to combine both of the above. For example, the journalists pay for the researcher’s time as a contractor, but the researcher pays for supercomputing time from a relevant grant. One option here is an outreach budget - especially in Europe, labs are often supported by broad grants covering many researchers, which sometimes have a section dedicated to public outreach. Working with journalists to put a study in the eyes of the public can be justified under this umbrella, and so supercomputing time to further such a project can be framed as a component of outreach, tapping an otherwise underutilized portion of the budget.

Closing Thoughts

Leaked data provides unprecedented insights into organizations and individuals, and can be invaluable for journalistic and academic studies. Because of the volume and variety of data, a wide range of skills is needed to make sense of it. Therefore, we are likely to see increasingly diverse research teams, blurring the lines between disciplines to pursue truth as effectively as possible. These collaborations will be challenging at first, crossing a number of methodological and knowledge-producing boundaries, but I am confident these hurdles can and will be overcome.